Contents

Computer vision is the discipline that enables machines to interpret visual data from the world. The core aim is to convert images and video into accurate, actionable information, such as identifying objects, tracking motion, or measuring shapes.

A well-designed system follows a pipeline:

capture

process

extract representations

make decisions

This article explains these core concepts in a clear, practical way and emphasizes how each step contributes to reliable outcomes in real applications.

By focusing on a single concept—the computer vision pipeline—practitioners can design, compare, and improve systems without getting lost in jargon. The approach remains practical, with a bias toward verifiable results and reusable components that fit real workflows.

Visual data begins with sensors that convert light into digital signals. Cameras, depth sensors, and lidar provide frames or point clouds that form the raw input.

Key ideas include resolution, color spaces, frame rate, and exposure. Preprocessing steps such as normalization, resizing, and denoising help standardize inputs for downstream tasks.

Calibration determines the camera’s intrinsic parameters, while extrinsic parameters describe scene geometry between sensors.

Understanding these basics helps anticipate limitations like motion blur or uneven lighting and informs deployment decisions.

Raw pixel data is high-dimensional and often redundant. The next step is to establish representations that capture meaningful information. Early approaches rely on classic features such as edges, corners, and texture descriptors.

Modern workflows frequently use learned representations, where neural networks extract hierarchical features useful for multiple tasks. A typical progression moves from low-level features to more abstract descriptors, enabling robust matching, segmentation, or recognition even under varying lighting and viewpoints.

This shift from pixels to compact representations is central to effective computer vision behavior.

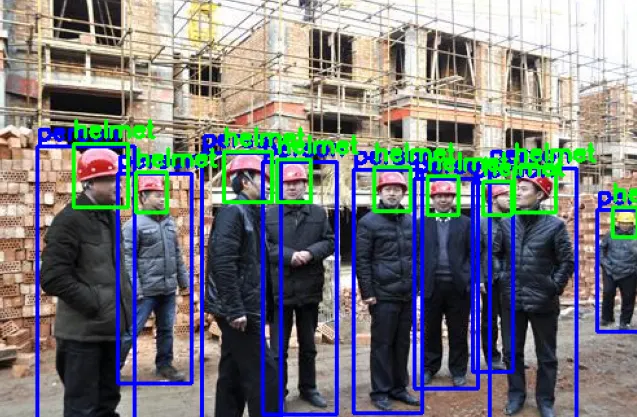

Decisions arise from models trained to map representations to outputs. In many contexts, this involves machine learning techniques that map features to labels, detections, or masks.

Supervised learning uses labeled data to train classifiers, detectors, or segmenters.

Loss functions quantify error and guide optimization that adjusts model parameters.

Transfer learning helps adapt to new tasks with less labeled data.

Inference then produces predictions, scores, or maps that inform downstream actions. Practical considerations include data quality, bias, and the need for robust performance across diverse environments.

Reliable vision systems require careful evaluation. Metrics such as accuracy, precision, recall, and the intersection-over-union score for segmentation quantify performance on representative data.

Cross-validation, held-out sets, and real-world testing help assess generalization. Deployment considerations span latency, resource use, and hardware constraints, from edge devices to cloud processing.

Finally, monitoring and updating models after deployment ensures continued reliability as conditions change, such as weather shifts or new camera setups.

For practitioners, a lightweight workflow translates theory into actionable steps. A typical pipeline reads an image, converts it to grayscale, and extracts edges as a simple demonstration.

Python programming example:

import cv2

img = cv2.imread('frame.jpg', cv2.IMREAD_GRAYSCALE)

edges = cv2.Canny(img, 50, 150)

cv2.imwrite('edges.png', edges)This sequence demonstrates preprocessing, feature extraction, and result storage in a reusable pattern. More complex tasks, such as object detection or segmentation, build on this foundation with additional models and data pipelines.

Understanding core concepts enables practical gains across industries. In manufacturing, automated inspection accelerates workflows, improves quality control, and contributes to digital productivity.

In logistics, vision-enabled tracking enhances inventory management and asset visibility. As methods evolve, practitioners should follow evidence-based developments, validate improvements on representative data, and maintain a bias-aware perspective.

The goal is to apply robust concepts to real problems, keeping implementations maintainable and transparent. For those following tech tutorials, the same pattern—define the task, choose representations, select a model, evaluate, and refine—guides progress.